In the age of social media, false information spreads fast. Emotions and personal belief often carry more weight than facts, especially online. New research explores how misinformation, content people share without knowing it’s wrong, spreads and how to stop it. A research team studied several high-profile cases, including the COVID-19 pandemic, the last US election, and the debate around climate change.

Prof. Gabriele Lenzini, head of the IRiSC research group at the University of Luxembourg’s SnT, has a computer science background and studies how the human element can affect technical systems, particularly in cybersecurity. He warns against oversimplifying the problem. “We must resist the temptation to judge posts as simply true or false,” he says. Treating it as a binary question makes it too easy to hand the problem over to AI tools, which isn’t the answer. Instead, the focus is on helping people think critically and engage in civil debate.

Over the past four years, his team has looked at two things: how false content gets debunked online, and how fake followers and engagement are bought and sold.

Fact-checking can backfire

Correcting false information online sounds simple. But it often makes things worse. The REMEDIS project, launched in 2022, looked at health misinformation from three angles: data science, human-computer interaction, and regulation. One of its key findings is that fact-checking often does more harm than good. Professional fact-checks can feel politically biased to many users. Rather than correcting false beliefs, they tend to trigger defensiveness and conflict.

In 2021, X (formerly Twitter) tried a different approach with Community Notes. Instead of relying on hired fact-checkers, the tool lets X’s users fact-check each other’s posts. An algorithm identifies when enough users agree, and the note then appears on the original post. In 2025, Meta began testing a similar system on Facebook, Instagram, and Threads.

“Community Notes is a promising way to fact-check misleading content at scale,” says Dr. Yuwei Chuai, a computational social scientist. The tool can slow the spread of false posts and prompt authors to delete them, but its notes still tend to appear too late to stop the initial surge of shares.

The underlying challenge runs deeper than timing. “No one likes being told they’re wrong,” says Dr. Anastasia Sergeeva, a specialist in cybersecurity psychology and sociotechnical systems. That resistance was made worse by low levels of trust in the media during the pandemic. Misinformation also rarely fits neatly into true or false categories. It can grow from personal opinion, anecdotal experience, or misread data, none of which lend themselves to straightforward correction after the fact.

To address this, the REMEDIS team built a serious game called Debunked. Set in a crisis scenario, the game asks players to decide whether to spread or counter false information. It draws on inoculation theory. Just as a vaccine builds immunity by exposing the body to a mild version of a virus, the game builds resistance to manipulation by letting players spot it in a safe setting. The goal is to strengthen critical thinking before misinformation takes hold.

Left to right: Huiyun Tang, Yuwei Chuai, Daphné Chapellier, Jean De Meyere, Alain Strowel, Anastasia Sergeeva, Luc Desaunettes-Barbero

Mapping the market for fake engagement

While REMEDIS focuses on the content of misinformation, the FAMOUS project looks at the economic system behind disinformation. Unlike misinformation, disinformation is false information that is spread deliberately to deceive. Launched in October 2024 in collaboration with the University of Newcastle, the project mapped a large European market for fake engagement services.

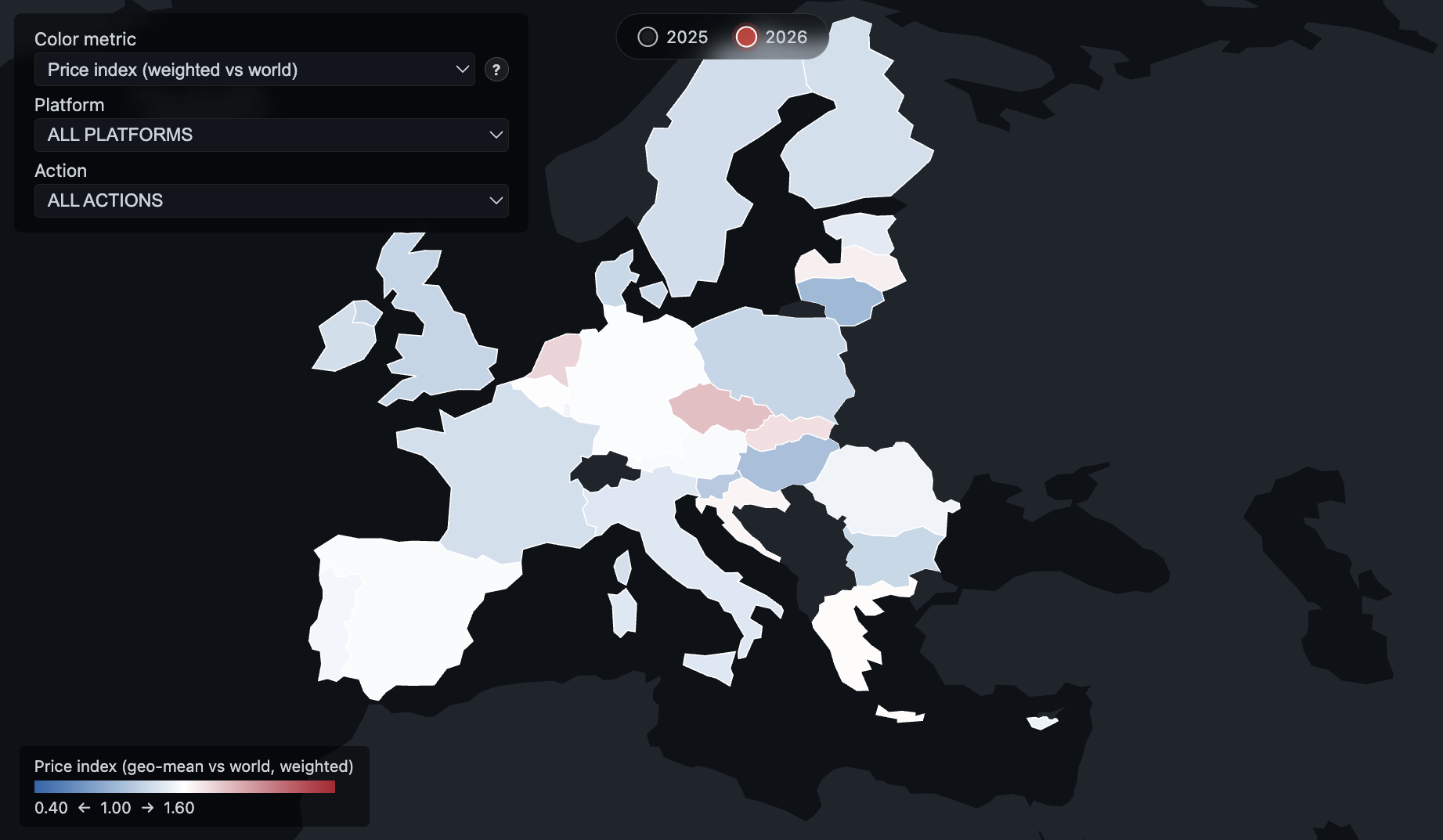

The researchers identified more than 2,000 websites selling fake accounts, followers, reviews, and likes. They published the findings as a publicly accessible interactive map. The next phase of the project will examine why marketing professionals and influencers buy fake engagement in the first place.

“Buying fake followers, clicks, and likes undermines the entire digital value chain,” says Dr. Sviatlana Höhn, a specialist in conversational AI, UX design, and linguistics. Advertising platforms gain nothing when bots interact with bots, and companies pay for impressions that never lead to real results. Yet the market keeps growing, which points to deeper questions about incentives and how the space is regulated.

Why working across disciplines matters

Both projects bring together computer scientists, data scientists, legal scholars, social scientists, and psychologists. That mix reflects how complicated the problem really is. FAMOUS, for example, includes a legal research track that analyses current legislation and looks for gaps in how fake engagement is regulated.

Technology itself shapes how misinformation spreads. Social media algorithms are built to show users content they’re likely to engage with, which reinforces echo chambers and pushes people towards more extreme views.

But technology alone can’t fix what is also a human problem. Understanding why people share false information, how they react to being corrected, and what motivates them to buy fake followers requires insights that go beyond computer science. That’s where social scientists and psychologists come in. Legal scholars, meanwhile, help identify where regulation is missing or failing.

Lenzini, who also serves as vice-chair of the University of Luxembourg’s Ethics Review Panel, argues that no single field can solve this alone. Regulators need a better grasp of digital technology. Computer scientists need to factor in human emotions, social behaviour, and legal constraints. Only by working together can researchers and policymakers build responses that actually work.

This research was supported by Luxembourg’s FNR, Belgium’s FNRS, and the European Media and Information Fund (EMIF).

About IRiSC

The IRiSC research group at SnT integrates social sciences and legal compliance into computer science to develop secure solutions.

Reliable, secure, and trustworthy systems have no bugs or design flaws, are easy to use, and comply with standards. IRiSC recognises this complexity and integrates methods from social sciences and legal compliance into computer science to conduct research on sociotechnical cybersecurity.

About REMEDIS

REgulatory and other solutions to MitigatE online DISinformation (REMEDIS) is an interdisciplinary project that brings together researchers in digital law, social science, information and communication, history, and computer science. It aims to provide innovative regulatory frameworks and legally compliant socio-technical solutions to monitor online disinformation and its effects.

About FAMOUS

Fake Activity Market Observation system of Unethical Services (FAMOUS) seeks to expose and reduce the prevalence of fake online activities in Europe, such as influencer marketing fraud, phishing scams, and counterfeit product sales. By combining advanced technologies, including large language models and cybersecurity tools, the project maps and monitors Fake Activity Shops (FAS) operating across European countries. This interdisciplinary effort involves collaboration among social media platforms, regulatory bodies, academics, and the general public. The goal is to offer new tools, methodologies, and policy recommendations to protect online spaces and improve trust in digital ecosystems.